4/19/2023

Cpuinfo rose

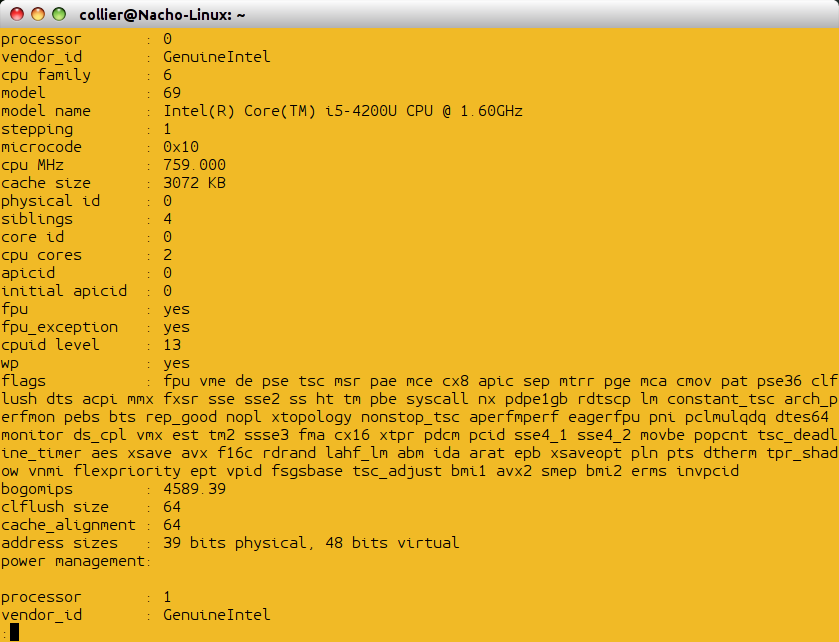

You just replaced your creaky, old server with a shiny, modern server. The new hotness has more (and faster) disks, has four times the amount of RAM, and is generally all around mo’bettah. But are you getting all the performance you should? Do you know how big your shiny new L1, L2, and L3 caches are, and how they are shared across processor cores and sockets? Exactly where is all that memory, anyway?

Most modern commodity servers have a non-uniform memory architecture (NUMA), meaning that the RAM is distributed among the processors on the machine. The processors are, in turn, connected by a high-speed network (e.g., QPI on Intel-based servers and HyperTransport on AMD-based servers). When a process is running on a specific processor, reading and writing memory directly attached to that processor is fastest. Reading and writing memory attached to a different processor is slower and also consumes bandwidth across the interprocessor network.

The open source Portable Hardware Locality (hwloc) project, a sub-project of the larger Open MPI community, is a set of command-line tools and API functions that let system administrators and C programmers examine the NUMA topology and get details about each processor and memory type in a server. Plus, it draws pretty pictures! Managers love pretty pictures (Figure 1).

Figure 1: Sample output showing a two-socket, eight-core server with 16GB of RAM. “PU” stands for “processing unit,” or hardware thread (“hyperthreads” in Intel processors).
